The tool is live!

In November 2014 I announced that within the Software Engineering Research Group of Delft University of Technology we were building a tool that is based on a so-called Cost/Duration Matrix. I am proud and happy at the same time to let you know that the Software Project Benchmark tool is live!

The Software Project Benchmark tool is a spin-off of the Software Engineering Research Group of Delft University of Technology.

An open source solution

The Software Project Benchmark tool is an open source solution. We are convinced that evidence-based portfolio management deserves more attention from IT organizations. Therefore, our tool is available for free and anyone can use the research data that we have collected. The Software Project Benchmark is a safe tool to use; we do not store any user data or any data that is uploaded to the tool. The results of the benchmark are not tracked, used or stored by us. In return, we ask the users of the tool to make us happy by letting us know what their experiences with the tool are and where they see room for improvement.

The tool itself is built in R, a language and environment for statistical computing and graphics that’s been widely used in scientific environments. R provides a wide variety of statistical and graphical techniques, and is highly extensible. The R language is often the vehicle of choice for research in statistical methodology, and R provides an Open Source route to participation in that activity.

It’s a beta version

The Software Project Benchmark tool is a beta version of a hopefully more mature benchmark tool that we plan to publish in the future. Therefore the available functionality is limited. For example, we have no print function yet, and we apologize upfront for any unforeseen errors. But we thoroughly tested the benchmark functionality and the underlying calculations, and the tool is the result of long and thorough research.

Spin-off of scientific research

An important feature of the Software Project Benchmark tool is the availability of a large research repository, including data of almost 400 finalized software projects, which is the source for the benchmark functionality.

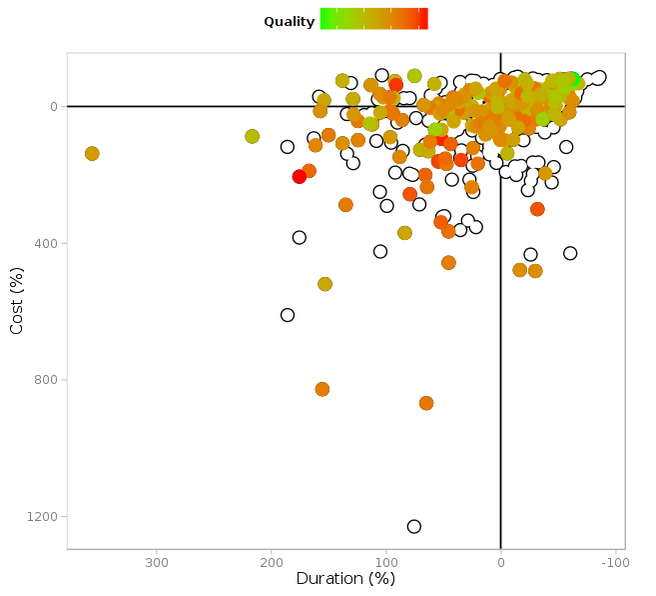

With the Software Project Benchmark tool everybody can compare the project cost, the duration of a project and the delivered quality of a subset of their own completed software projects with that of projects in our research dataset. The tool provides an overview of the performance of an organization, measured on a software project portfolio as a whole. This makes it an excellent tool for stakeholders in software portfolio management to gain insight into those aspects in which their own organization excels and also the potential for improvement in the IT portfolio.

The Software Project Benchmark tool offers end users functionality to build a template-based input file with project data that is collected and measured within their own company, analyze and benchmark their data against our research dataset, set peer group definitions for comparison, and adjust configuration settings.

At the moment we are busy developing new research ideas that we can build upon the tool. To give you an idea of what we are working on: we are curious about how to enrich our model with quantified information about value. In our current model we noticed that software projects that end up in the so-called Bad Practice quadrant (they scored worse than average for both cost and duration) might still represent a high amount of value for the company. Or the other way around: a project ending up as Good Practice might deliver little or no value at all. We will keep you posted on this in future blog posts!
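To make the quadrant idea a bit more concrete, here is a minimal sketch in Python. It is not the code of the tool (which is built in R) and it rests on assumptions: it uses the plain mean of the sample as "average", it treats a higher cost or a longer duration as worse, and the labels for the two mixed quadrants as well as the field names `name`, `cost` and `duration` are made up for illustration. The tool itself may use a different baseline.

```python
from statistics import mean


def classify(projects):
    """Assign each project to a quadrant of a cost/duration matrix.

    Assumption: "worse than average" means strictly above the plain mean
    of the sample. The real tool may define the baseline differently.
    """
    avg_cost = mean(p["cost"] for p in projects)
    avg_duration = mean(p["duration"] for p in projects)

    quadrants = {}
    for p in projects:
        worse_cost = p["cost"] > avg_cost
        worse_duration = p["duration"] > avg_duration
        if worse_cost and worse_duration:
            label = "Bad Practice"
        elif not worse_cost and not worse_duration:
            label = "Good Practice"
        elif worse_cost:
            label = "Mixed (cost worse)"      # hypothetical label
        else:
            label = "Mixed (duration worse)"  # hypothetical label
        quadrants[p["name"]] = label
    return quadrants


# Invented sample data, for illustration only.
sample = [
    {"name": "A", "cost": 100, "duration": 6},
    {"name": "B", "cost": 300, "duration": 12},
    {"name": "C", "cost": 100, "duration": 12},
    {"name": "D", "cost": 300, "duration": 6},
]

for name, quadrant in classify(sample).items():
    print(name, quadrant)
```

Note that nothing in this sketch looks at value. That is exactly the gap described above: a project can land in Bad Practice here and still be worth a lot to the company.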

Try it out

But for now, start the Software Project Benchmark tool yourself! Just try it out. Nothing can go wrong; it’s perfectly safe and you can fool around as long as you like. But please tell us about your experiences! Did you like the tool? Did you hate it? Do you think measurement sucks? Can we improve things? We’d like to know! Just leave a reply below this post.

Have fun!

www.softwareprojectbenchmark.nl

About the authors

Hennie Huijgens is a creative and driven information management specialist with broad experience in all aspects of IT performance measurement, business innovation, project management and IT metrics. He is the owner of Goverdson. Goverdson’s core competence is helping software companies excel in the effective and efficient delivery of innovative IT solutions.

Georgios Gousios is assistant professor at the Digital Security Group of Radboud University Nijmegen. His primary research interests are software engineering, software analytics and programming languages.